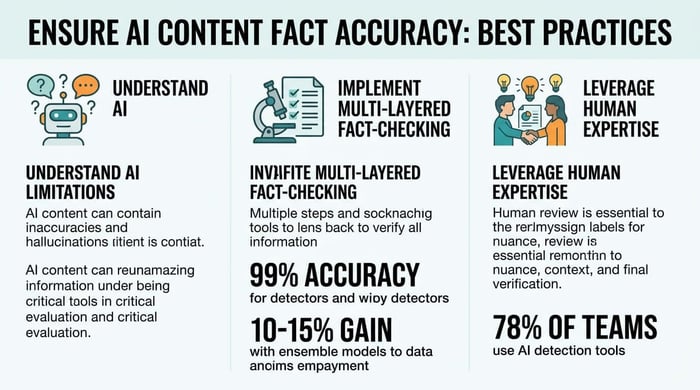

Ensure AI Content Fact Accuracy: Best Practices

Aidan Buckley

SEO


March 19th, 2026

14 minute read

Table of Contents

- Understand the Limitations of AI Content Generation

- Implement a Multi-Layered Fact-Checking Process

- Leverage Human Expertise for Nuance and Verification

- Utilize Specialized AI Fact-Checking Tools

- Establish Clear Editorial Guidelines and Workflows

- Continuously Monitor and Adapt Your Accuracy Protocols

- Conclusion

The promise of AI-driven content generation is immense, with 94% of marketers planning to use AI for content creation in 2026. However, this efficiency comes with a significant challenge: ensuring factual accuracy. AI hallucinations, where models generate plausible but incorrect information, are a growing concern, and leading AI chatbots frequently repeat false information when asked about controversial topics. The stakes are high: inaccurate content can erode trust, damage brand reputation, and lead to costly compliance issues, especially with new regulations like the EU AI Act, which sets fines of up to 3% of global annual turnover for breaching transparency obligations such as labeling AI-generated content. This guide provides a framework for businesses to navigate the complexities of AI content generation, blending advanced AI tools with essential human oversight to maintain the highest standards of accuracy and trustworthiness.

- Understand AI Limitations: Recognize the inherent weaknesses of AI, including hallucination risks and the need for human oversight.

- Implement Multi-Layered Fact-Checking: Combine automated and manual verification processes to ensure comprehensive accuracy.

- Leverage Human Expertise: Integrate subject matter experts and editorial review boards for critical thinking and final content validation.

- Utilize Specialized AI Tools: Deploy advanced AI content detection, verification, and research tools to enhance accuracy workflows.

- Establish Clear Guidelines: Develop comprehensive style guides, fact-checking protocols, and define roles for consistent quality.

- Continuously Monitor and Adapt: Track AI performance, conduct regular audits, and stay updated on evolving AI advancements and regulations.

Understand the Limitations of AI Content Generation

Despite rapid advancements, AI models are not infallible. Recognizing their inherent weaknesses is the first step toward building a robust accuracy framework, especially given that 94% of marketers plan to use AI for content creation in 2026. Ignoring these limitations can lead to significant reputational and financial risks.

Acknowledge AI Hallucinations and Inaccuracies

AI models, particularly large language models (LLMs), are prone to "hallucinations": generating information that is plausible but factually incorrect or entirely fabricated. This is a significant challenge, as leading AI chatbots frequently repeat false information when prompted with questions about controversial news topics, at nearly twice the rate observed a year earlier. A 2025 Duke student study found that GenAI accuracy varies by subject, highlighting the inconsistency and unpredictability of AI outputs across different domains.

Key indicators of AI hallucinations include:

- Fabricated statistics or citations.

- Misinterpretation of context.

- Generation of non-existent entities or events.

- Contradictory information within the same output.

- Overly confident assertions about uncertain facts.

Recognize the Need for Human Oversight

While AI can generate content at scale, it lacks human critical thinking, contextual understanding, and ethical judgment. AI should assist, not replace, humans. Human verification and source checks remain crucial, as evidenced by GPTZero's Hallucination Check flagging flawed citations in AI-generated content. This underscores the necessity of human intervention to prevent the spread of misinformation and ensure AI content quality and factual accuracy.

The critical role of human oversight involves:

- Applying critical thinking to AI-generated claims.

- Verifying sources and cross-referencing information.

- Ensuring ethical considerations are met.

- Adding nuanced understanding and context.

- Making final editorial judgments.

Stay Informed on Evolving Regulatory Landscapes

The regulatory environment for AI-generated content is rapidly evolving. The EU AI Act's transparency obligations, enforceable from August 2026, require labeling of AI-generated content and disclosure of synthetic interactions, with fines of up to 3% of global annual turnover for non-compliance. California laws, effective January 1, 2026, impose civil penalties for misleading AI use and require AI developers to provide free detection tools and embed latent disclosures, with per-violation fines for non-compliance with AB 853. Staying abreast of these regulations is vital for legal compliance and maintaining public trust.

Key regulatory aspects to monitor include:

- Mandatory labeling of AI-generated content.

- Disclosure requirements for synthetic interactions.

- Penalties for misleading AI use.

- Requirements for AI developers to provide detection tools.

- Embedding latent disclosures within AI outputs.

Implement a Multi-Layered Fact-Checking Process

A single point of failure in fact-checking is unacceptable. A multi-layered approach combines automated and manual checks to ensure comprehensive accuracy, especially as AI-generated content now accounts for an estimated 15-20% of new online content. This comprehensive strategy is vital for maintaining credibility.

Ground AI with Verified Data Sources

To reduce AI hallucinations, instruct models to "ONLY use the text provided above" rather than relying on their internal training data. Implement Retrieval-Augmented Generation (RAG) to tether AI outputs to real-time, verified data, linking directly to documentation for factual claims. This best practice helps prevent the AI from fabricating information, a common failure given how often leading AI chatbots repeat false information.

"The usage of LLMs in papers at AI conferences is rapidly evolving, and NeurIPS is actively monitoring developments. In previous years, we piloted policies regarding the use of LLMs, and in 2025, reviewers were instructed to flag hallucinations."

Strategies for grounding AI include:

- Using Retrieval-Augmented Generation (RAG) for real-time data.

- Providing explicit instructions to use only provided text.

- Linking directly to authoritative documentation.

- Fine-tuning models on high-quality, verified datasets.

- Implementing knowledge graphs for structured data.
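
As a rough illustration of the first two strategies, the sketch below retrieves the most relevant verified passages and builds a prompt that restricts the model to them. It is a minimal sketch, not a production RAG pipeline: the function names (`retrieve`, `build_grounded_prompt`), the sample passages, and the word-overlap scoring are all assumptions made for this example, and a real system would typically use embeddings and a vector index.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set used for a naive overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages sharing the most words with the question.

    Word overlap is a stand-in for embedding search so the sketch
    stays dependency-free.
    """
    q = tokenize(question)
    ranked = sorted(passages, key=lambda p: len(q & tokenize(p)), reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Put numbered sources first, then the restriction, then the question."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        f"{sources}\n\n"
        "ONLY use the text provided above. Cite the source number for each "
        "claim. If the answer is not in the text, reply 'not found in sources'.\n\n"
        f"Question: {question}"
    )


# Hypothetical verified passages, e.g. pulled from internal documentation.
verified_passages = [
    "The refund window for annual plans is 30 days from purchase.",
    "Support hours are 9am to 5pm on weekdays.",
    "Monthly plans can be cancelled at any time.",
]
question = "What is the refund window for annual plans?"
prompt = build_grounded_prompt(question, retrieve(question, verified_passages))
print(prompt)
```

The resulting string can be sent to whichever model the team uses. Asking for a source number per claim gives the human reviewer a direct pointer to the passage to check.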

Employ Chain-of-Thought Prompting

Encourage AI to "explain your reasoning step-by-step" to minimize logic leaps and improve accuracy. This technique prompts the AI to show its work, making it easier to identify potential errors or unsupported claims before they make it into the final content. It also helps in auditing the AI's decision-making process, a crucial step given that organizations using AI have reported negative consequences, about one-third of them attributed to AI inaccuracy.

Benefits of Chain-of-Thought prompting:

- Reveals the AI's reasoning process.

- Helps identify logical fallacies.

- Facilitates human intervention at critical junctures.

- Improves the auditability of AI outputs.

- Enhances overall accuracy and reliability.
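
One way to make step-by-step output reviewable is to request a fixed format and then split the response into individual steps an editor can check one at a time. The sketch below assumes a numbered-list format and an `Answer:` line. Both are conventions chosen for this example, and the sample response is hand-written, not real model output.

```python
import re
from typing import Optional

# Instruction appended to every question; the exact wording is an assumption.
COT_SUFFIX = (
    "Explain your reasoning step-by-step as a numbered list, "
    "then give the final answer on a line starting with 'Answer:'."
)


def build_cot_prompt(question: str) -> str:
    """Attach the step-by-step instruction to a question."""
    return f"{question}\n\n{COT_SUFFIX}"


def split_reasoning(response: str) -> tuple[list[str], Optional[str]]:
    """Separate numbered reasoning steps from the final answer line."""
    steps = re.findall(
        r"^[ \t]*\d+[.)][ \t]+(.*\S)[ \t]*$", response, flags=re.MULTILINE
    )
    match = re.search(r"^Answer:[ \t]*(.*\S)[ \t]*$", response, flags=re.MULTILINE)
    return steps, (match.group(1) if match else None)


# Hand-written stand-in for a model response.
sample_response = """1. The source states the refund window is 30 days.
2. The purchase was made 45 days ago.
3. 45 is greater than 30, so the window has closed.
Answer: The purchase is no longer eligible for a refund."""

steps, answer = split_reasoning(sample_response)
for number, step in enumerate(steps, start=1):
    print(f"Step {number} to verify: {step}")
print(f"Final answer: {answer}")
```

Each extracted step is a separate claim that a reviewer can accept or reject, which is easier to audit than a single block of prose.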

Conduct Human Verification and Source Cross-Referencing

After initial AI generation and automated checks, human fact-checkers must verify all critical claims against authoritative sources. This includes cross-checking against databases, official reports, and expert opinions. GPTZero's Hallucination Check, for example, flags flawed citations, demonstrating the persistent need for human review of AI-generated sources. This essential human layer ensures the integrity of the content.

Key steps in human verification:

- Cross-referencing claims with multiple authoritative sources.

- Consulting databases and official reports.

- Seeking expert opinions for complex topics.

- Manually checking all citations and references.

- Applying human judgment to ambiguous information.
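
Human checkers can be pointed at the riskiest sentences first. The sketch below is a simple heuristic, not a fact-checker: it flags sentences that contain a percentage, a dollar figure, or a year but no visible source marker, so a person can verify them. The patterns and the sample draft are assumptions for illustration, and the filter will miss claims that carry no numbers.

```python
import re

# Sentences with percentages, dollar amounts, or years usually carry checkable claims.
STAT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s*%|\$\s?\d|\b(?:19|20)\d{2}\b")
# Very rough signs that a source is already attached to the sentence.
SOURCE_PATTERN = re.compile(r"https?://|\[\d+\]|according to", re.IGNORECASE)


def split_sentences(text: str) -> list[str]:
    """Split on sentence-ending punctuation followed by whitespace."""
    marked = re.sub(r"([.!?])\s+", r"\1\n", text)
    return [s.strip() for s in marked.split("\n") if s.strip()]


def flag_unsourced_claims(text: str) -> list[str]:
    """Return sentences that state a figure without any source marker."""
    return [
        s
        for s in split_sentences(text)
        if STAT_PATTERN.search(s) and not SOURCE_PATTERN.search(s)
    ]


draft = (
    "Our tool is easy to use. Adoption grew 40% last year. "
    "According to the 2024 annual report [1], revenue reached $2 million. "
    "The team is based in Dublin."
)
for sentence in flag_unsourced_claims(draft):
    print("Needs a source:", sentence)
```

A sentence that passes this filter is not verified. It only means a citation is present, and the citation itself still has to be checked by hand.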

Leverage Human Expertise for Nuance and Verification

While AI excels at speed and scale, human expertise provides the critical thinking, ethical judgment, and contextual understanding necessary for truly accurate and trustworthy content. This blend is particularly important as 89% of top-ranking AI content features human editorial signatures.

Integrate Subject Matter Experts (SMEs)

Involve SMEs in the content creation and review process. Their deep understanding of the topic can catch subtle inaccuracies or misinterpretations that AI might miss. For example, a legal expert reviewing AI-generated legal content can ensure compliance with specific regulations and nuances of the law. This is especially important given that GenAI accuracy varies by subject, making domain-specific knowledge invaluable.

How SMEs enhance accuracy:

- Identify subtle inaccuracies or misinterpretations.

- Ensure compliance with industry-specific regulations.

- Add nuanced context and deeper insights.

- Validate technical details and jargon.

- Provide authoritative endorsement for content.

Establish Editorial Review Boards

Create dedicated editorial review boards responsible for final content approval. These boards, composed of experienced editors and fact-checkers, ensure that all content adheres to the highest standards of accuracy, tone, and brand voice. Their role is to provide the ultimate human gatekeeping function before publication, a practice that contributes to the quality improvements reported by marketers using AI tools.

Functions of an editorial review board:

- Final content approval before publication.

- Ensuring adherence to accuracy standards.

- Maintaining consistent brand voice and tone.

- Providing a critical human gatekeeping function.

- Resolving complex factual disputes.

Provide Continuous Training for Human Teams

As AI technology evolves, so too must the skills of human content teams. Provide ongoing training on AI capabilities, limitations, and best practices for fact-checking AI-generated content. This includes training on advanced prompting techniques, using AI detection tools, and understanding regulatory changes. Such training ensures that human teams can effectively manage and verify content generated by AI content creation tools.

Key training areas for human teams:

- Advanced AI prompting techniques.

- Effective use of AI detection tools.

- Understanding evolving AI capabilities and limitations.

- Staying updated on regulatory changes.

- Best practices for human-AI collaboration.

Utilize Specialized AI Fact-Checking Tools

The market for AI fact-checking and detection tools is rapidly expanding, projected to reach $1.2 billion by 2027. Leveraging these specialized tools is crucial for efficient and effective accuracy assurance.

Deploy AI Content Detection and Plagiarism Tools

Integrate advanced AI content detection tools to identify AI-generated text and potential plagiarism. Tools like TextShift, with 99.18% accuracy using ensemble models, and Originality.ai, with 94% accuracy and a low false positive rate, can help ensure content originality and flag suspicious passages. Other tools like Copyleaks and GPTZero also offer high accuracy rates, making them indispensable for vetting content.

| Metric / Tool | Value | Source |
|---|---|---|
| AI Content Detection Accuracy | | |
| TextShift (ensemble) | 99.18% | textshift.blog |
| Originality.ai | 94% | gptzero.me |
| Copyleaks | 92% | gptzero.me |
| Turnitin | 90% | textshift.blog |
| GPTZero | 85% | gptzero.me |
| Jottler (AI SEO Agent) | 98% (AI-generated content detection) | Internal Data |
| Single-model detectors (average) | 80-90% | textshift.blog |
| Ensemble vs. Single-model (outperformance) | 10-15% | textshift.blog |
| Industry False Positive Rate (average) | 5-15% | textshift.blog |
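
To put the false positive range in the table into practical terms, the short calculation below estimates how many genuinely human-written articles a detector would wrongly flag. The batch size of 200 is a hypothetical figure chosen for the example. Only the 5-15% range comes from the table.

```python
def expected_false_flags(
    human_written: int, fpr_low: float, fpr_high: float
) -> tuple[int, int]:
    """Expected number of human-written pieces wrongly flagged as AI."""
    return round(human_written * fpr_low), round(human_written * fpr_high)


# Hypothetical batch of 200 human-written articles, 5-15% false positive rate.
low, high = expected_false_flags(200, 0.05, 0.15)
print(f"Expected false flags: between {low} and {high} of 200 articles")
```

At that volume, a detector flag alone is weak evidence, which is why flagged pieces should go to a human reviewer and not be rejected automatically.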

Using Jottler to Enhance Verification and Research

Beyond detection, some AI tools are designed to assist with verification. For instance, Jottler, an autonomous AI SEO Agent, incorporates deep research and anti-hallucination fact-checking from 14+ sources directly into its content generation process. This proactive approach helps ensure AI content quality and factual accuracy from the outset, streamlining the verification workflow for busy businesses. By automating the initial research and fact-checking, Jottler allows human editors to focus on nuanced review and critical thinking, rather than spending time on rudimentary verification steps. Other tools, like Rankability, offer free AI detection with high accuracy rates across various LLMs, providing additional layers of checking.


How Jottler enhances verification:

- Integrates deep research from multiple sources.

- Features anti-hallucination fact-checking.

- Automates initial verification steps.

- Streamlines the overall content workflow.

- Frees up human editors for higher-level review.

Implement Multimodal AI for Comprehensive Checks

Consider tools like Truthscan, which offer multimodal AI detection for text, images, video, and voice, with 99%+ forensic level accuracy for deepfake detection. As content becomes increasingly complex and incorporates various media types, multimodal AI ensures that all elements are factually sound and authentic. This holistic approach is crucial in an era where cognitive manipulation and AI are expected to shape disinformation in 2026.

Benefits of multimodal AI checks:

- Detects inaccuracies across text, images, video, and voice.

- Offers forensic-level accuracy for deepfake detection.

- Provides a holistic approach to content authenticity.

- Crucial for combating disinformation in complex media.

- Ensures factual soundness across all content elements.

Establish Clear Editorial Guidelines and Workflows

Standardizing the content creation and review process is essential for consistent accuracy, especially when scaling AI content generation. This is critical as 78% of content marketing teams now use AI detection tools, necessitating clear internal protocols.

Develop Comprehensive Style Guides and Fact-Checking Protocols

Create detailed style guides that include specific instructions for factual accuracy, citation standards, and acceptable source types. Establish clear, step-by-step fact-checking protocols that outline who is responsible for each stage of verification, from initial AI output review to final editorial sign-off. This ensures consistency across all content and gives reviewers a defined way to handle detector results, given the average industry false positive rate of 5-15%.

Elements of comprehensive style guides:

- Specific instructions for factual accuracy.

- Detailed citation standards.

- Guidelines for acceptable source types.

- Step-by-step fact-checking protocols.

- Clear roles for each stage of verification.

Define Roles and Responsibilities for AI Content

Clearly define the roles and responsibilities of human editors, fact-checkers, and AI operators. This includes outlining who is accountable for the accuracy of AI-generated content at each stage of the workflow. Without clear accountability, errors are more likely to slip through. This structured approach is vital for teams producing significantly more content with automated SEO content publishing systems.

Defined roles ensure:

- Clear accountability for content accuracy.

- Efficient workflow management.

- Reduced likelihood of errors.

- Seamless collaboration between human and AI.

- Optimized resource allocation.

"The industry is moving from 'vibes' to quantifiable bottom-line impact questions like 'how does this specific interaction impact the bottom line?'"

Implement Version Control and Audit Trails

Maintain rigorous version control for all content, tracking changes made by both AI and human editors. Implement audit trails to document the verification process, including sources checked and any corrections made. This transparency is crucial for accountability and for demonstrating compliance with regulatory requirements, such as the EU AI Act's labeling mandates.

Benefits of version control and audit trails:

- Tracks all changes made by AI and human editors.

- Documents the entire verification process.

- Ensures transparency and accountability.

- Facilitates compliance with regulatory requirements.

- Provides a historical record for future reference.
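
An audit trail can be as simple as one structured record per change. The sketch below shows one possible record shape, with a content hash so any later edit to the text is detectable. The field names, the `AuditEntry` class, and the in-memory log are assumptions for illustration. In practice the JSON lines would go to an append-only file or a database.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    article_id: str
    editor: str   # a person's name, or a label such as "ai-draft"
    action: str   # e.g. "generated", "fact-checked", "corrected"
    content: str  # full text of this version
    sources_checked: list[str] = field(default_factory=list)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_record(self) -> dict:
        """Replace the full text with its SHA-256 fingerprint."""
        record = asdict(self)
        record["content_sha256"] = hashlib.sha256(
            self.content.encode("utf-8")
        ).hexdigest()
        del record["content"]
        return record


def append_entry(log: list[str], entry: AuditEntry) -> None:
    """Store the entry as one JSON line."""
    log.append(json.dumps(entry.to_record(), sort_keys=True))


audit_log: list[str] = []
append_entry(
    audit_log,
    AuditEntry("post-042", "ai-draft", "generated", "Adoption grew 40% last year."),
)
append_entry(
    audit_log,
    AuditEntry(
        "post-042",
        "J. Editor",
        "corrected",
        "Adoption grew 14% last year.",
        sources_checked=["2024 annual report"],
    ),
)
for line in audit_log:
    print(line)
```

Because each line records who acted, what they did, and which sources they checked, the log doubles as evidence of the verification process if a regulator or client asks for it.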

Continuously Monitor and Adapt Your Accuracy Protocols

The AI landscape is dynamic. What works today may not be sufficient tomorrow. Continuous monitoring and adaptation are key to long-term accuracy, especially with global AI spending projected to reach $2.5 trillion in 2026.

Track AI Performance and Hallucination Rates

Regularly monitor the performance of your AI models, paying close attention to hallucination rates and the types of inaccuracies produced. Use this data to fine-tune models, adjust prompting strategies, and identify areas where human oversight needs to be strengthened. This proactive approach helps reduce AI hallucinations, which occur frequently for leading AI chatbots on controversial topics.

Methods for tracking AI performance:

- Regularly analyze hallucination rates.

- Categorize types of inaccuracies.

- Gather feedback from human reviewers.

- A/B test different prompting strategies.

- Use performance metrics to fine-tune models.
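
The first two methods above can be sketched as a small report computed from reviewer labels. The record format (`claim_id`, `error_type`) and the category names are hypothetical. The point is that each reviewed claim is labeled as either correct (`None`) or with an error type, so rates can be compared over time.

```python
from collections import Counter
from typing import Optional


def hallucination_report(reviews: list[dict]) -> dict:
    """Summarize reviewer labels into an overall rate and per-type counts."""
    total = len(reviews)
    flagged = [r for r in reviews if r["error_type"] is not None]
    by_type = Counter(r["error_type"] for r in flagged)
    return {
        "total_reviewed": total,
        "hallucination_rate": len(flagged) / total if total else 0.0,
        "by_type": dict(by_type),
    }


def make_review(claim_id: int, error_type: Optional[str] = None) -> dict:
    """Build one reviewer label; error_type None means the claim checked out."""
    return {"claim_id": claim_id, "error_type": error_type}


# Hypothetical labels from one week of human review.
reviews = [
    make_review(1),
    make_review(2, "fabricated_citation"),
    make_review(3),
    make_review(4, "wrong_statistic"),
    make_review(5),
    make_review(6, "fabricated_citation"),
    make_review(7),
    make_review(8),
]
report = hallucination_report(reviews)
print(report)
```

Running the same report for each prompting strategy or model version gives a like-for-like comparison for A/B tests.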

Conduct Regular Audits and Quality Checks

Perform periodic audits of published AI-generated content to assess overall accuracy and adherence to editorial guidelines. These quality checks can uncover systemic issues or areas where your fact-checking protocols need improvement. Consider using AI SEO agents to help with this process, ensuring that your content aligns with entity-based SEO best practices.

"The AI governance market is expanding at a 45.3% compound annual growth rate, with Gartner projecting that 40% of enterprise applications will require governance by 2026."

Benefits of regular audits:

- Uncover systemic issues in fact-checking protocols.

- Assess overall accuracy of published content.

- Ensure adherence to editorial guidelines.

- Identify areas for process improvement.

- Maintain high content quality over time.
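
For periodic audits, reviewing a random sample is usually more practical than re-checking every published piece. The sketch below draws a reproducible sample. The article IDs, the sample size of 5, and the seed are assumptions chosen for the example.

```python
import random


def draw_audit_sample(
    article_ids: list[str], sample_size: int, seed: int
) -> list[str]:
    """Pick articles for audit; a fixed seed makes the draw reproducible."""
    rng = random.Random(seed)
    k = min(sample_size, len(article_ids))
    return sorted(rng.sample(article_ids, k))


# Hypothetical list of 40 published posts.
published = [f"post-{n:03d}" for n in range(1, 41)]
this_quarter = draw_audit_sample(published, sample_size=5, seed=2026)
print(this_quarter)
```

Recording the seed alongside the audit results lets anyone confirm later that the sample was not hand-picked.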

Stay Updated on AI Advancements and Regulatory Changes

The field of AI is constantly evolving. Stay informed about new AI models, fact-checking tools, and regulatory developments. Adapt your protocols and workflows to incorporate new best practices and comply with emerging laws, such as the EU AI Act or California's AB 853, which requires AI developers to provide free AI-detection tools and embed latent disclosures. This ensures your automated SEO content publishing systems remain compliant and effective.

Staying updated involves:

- Monitoring new AI models and technologies.

- Researching emerging fact-checking tools.

- Tracking global and local regulatory changes.

- Adapting internal protocols and workflows.

- Ensuring continuous compliance and effectiveness.

Conclusion

Ensuring the factual accuracy of AI-generated content is not merely a best practice; it's a critical imperative for businesses in 2026 and beyond. By understanding AI's limitations, implementing multi-layered fact-checking, leveraging human expertise, utilizing specialized AI tools, establishing clear guidelines, and continuously adapting, organizations can harness the power of AI for content creation while safeguarding trust and reputation. The blend of advanced AI and diligent human oversight is the cornerstone of a successful content strategy, driving higher ROI than traditional methods and enabling Level 3 AI maturity teams to produce 5-10x more content at 75-85% lower cost per article than Level 1 teams. Embrace these strategies not only to mitigate risks but also to unlock the full potential of AI to compound organic traffic and raise content quality. Start your SEO agent today to build a robust, accurate, and high-performing content strategy.
